You’re probably here because you typed a question like “will employers know I used AI on my resume?” into ChatGPT. Or maybe Google. You’re not alone. This is the new, anxious reality for job seekers in 2026.

AI tools like ChatGPT, Claude, and Gemini have reshaped how we approach job applications. They offer tempting shortcuts. They promise polished, professional documents. But that little voice in your head, the one asking if you’ll get caught, is worth listening to. The hiring landscape has changed dramatically, and not always in ways the AI hype beasts want you to hear.

The Question Everyone Asks ChatGPT (And Why ChatGPT Can’t Answer It)

It’s a bit meta, isn’t it? Asking an AI tool if employers can detect AI on your resume. The irony isn’t lost on me. Millions of job seekers, maybe even you, have typed that exact query into a large language model. You get a careful, hedged answer. It talks about “best practices” and “maintaining authenticity.” Very diplomatic. Very unhelpful.

The truth is, ChatGPT has no idea if your specific resume will be detected. It can’t predict human behavior, nor can it know the specific detection software or human biases a hiring manager might encounter. It processes language. It doesn’t predict the future of your job application.

This article isn’t going to give you diplomatic, hedged answers. We’re going to look at the real data, the actual tools, and what recruiters are doing right now, in 2026. Because the honest answer is far more nuanced than the black and white narratives you see out there. It’s not about whether AI can be detected. It’s about whether it is detected, and how. There’s a difference.

You’re trying to land a job. You’re using every tool at your disposal. That’s smart. But you also need to know the risks. And how to navigate them effectively. I think many people just assume the AI tools are foolproof, and that’s a mistake. They aren’t.

So let’s cut through the noise. Let’s get down to what’s actually happening on the ground, in HR departments, with the software, and with human recruiters. You need to know this stuff. Your career depends on it.

70% of Job Seekers Now Use AI: The 2026 Reality

Let’s start with a big number. A really big number. Seventy percent. That’s the percentage of job seekers who now use generative AI in their job search. Think about that for a second. It’s a massive jump from just two years ago, when only 39% used it. This isn’t a niche trend anymore. This is mainstream. Everyone is doing it, or at least a clear majority is.

And it’s not just for cover letters or brainstorming. Thirty-one percent of candidates admit they use AI directly to generate or ‘optimize’ their CVs. That means a significant portion of the resumes floating around the internet right now, close to one in three, have had direct AI involvement in their creation.

The sheer volume of applications has exploded too. One open position now attracts over 1000 applications in days. Employers are drowning. They simply cannot manually review every single resume. This is exactly why AI tools became popular for applicants in the first place, right? To stand out in a sea of candidates.

But this explosion of AI use creates a new problem for employers. Ninety percent of HR managers report increased workload from AI-generated applications. They’re seeing more applications, yes, but often from unqualified candidates. Twenty-three percent of employers report receiving more applications from people who simply aren’t a good fit, likely because AI made it easy for them to apply to everything.

And here’s the kicker. Twenty-one percent of employers admit they struggle to distinguish AI-generated resumes from authentic ones. That’s roughly one in five hiring teams that can’t tell the difference. This tells you something important about the effectiveness of many detection efforts. It’s not as straightforward as the detection tool vendors want you to believe.

So, the reality is, AI is everywhere. It’s helping a lot of people. It’s also causing headaches for recruiters. And it’s made the question of detection more complicated, not less.

What AI Detection Tools Actually Look For (And What They Miss)

Okay, let’s talk about the tech. What are these AI detection tools actually trying to do? How do they work? Tools like GPTZero, Originality.ai, ZeroGPT, Copyleaks, and Turnitin usually look for a few key things.

The main concepts are “perplexity” and “burstiness.”

- Perplexity measures how “surprised” a language model is by the text, meaning how hard each next word is to predict. Human writing is often unpredictable. We use unexpected phrases. We sometimes make grammatical errors. An AI model, when generating text, tends to use predictable word sequences. Low perplexity means the text is highly predictable, which suggests AI generation.

- Burstiness refers to sentence length variation. Human writers, as I’m trying to show you right now, naturally mix short sentences with longer, more complex ones. We have bursts of simple ideas, then elaborate. AI generated text, however, often produces sentences of very similar lengths and structures. It’s less “bursty.”
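To make those two ideas concrete, here is a minimal sketch. It assumes burstiness can be approximated by the coefficient of variation of sentence lengths, and it stands in for perplexity with a toy unigram word model using add-one smoothing. Real detectors score text with large neural language models, and the function names here are hypothetical, so treat this as an illustration of the concepts rather than how any vendor's product works.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (higher = more varied)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a toy unigram model fitted on `reference`.

    Add-one smoothing gives unseen words a small, non-zero probability.
    """
    words = text.lower().split()
    if not words:
        raise ValueError("text must contain at least one word")
    ref_words = reference.lower().split()
    counts = Counter(ref_words)
    vocab_size = len(counts) + 1  # one extra slot for unseen words
    total = len(ref_words)
    log_prob = sum(
        math.log((counts[w] + 1) / (total + vocab_size)) for w in words
    )
    return math.exp(-log_prob / len(words))


uniform = "I led a team. I ran a test. I cut the cost."
varied = "I quit. Then I spent three years rebuilding the billing system from scratch."
print(burstiness(uniform), burstiness(varied))
```

Three sentences of identical length score zero on this burstiness measure, while the short-then-long pair scores well above it. The perplexity function behaves the same way: words the reference text has already seen are "unsurprising" and pull the score down.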

Originality.ai also factors in plagiarism checks, looking for overlap with existing content on the web. Turnitin, famously used in academia, built a separate model specifically for detecting large language model output, distinct from its plagiarism detection. But these are still pattern matching systems. They aren’t mind readers.

Here’s where it gets really tricky. These tools claim high accuracy rates. We’re talking 94 to 96% accuracy, according to the vendors themselves. Those numbers sound great in a sales pitch, don’t they? What the pitch tends to skip is the false positive rate.

A false positive means perfectly human-written text gets flagged as AI. Imagine you wrote your resume from scratch, poured your heart into it, and a detection tool says it’s AI. That happens. A lot, actually. Some studies show these tools have a false positive rate on human-written formal text ranging from 10% to a staggering 50%, depending on the specific tool and the text being analyzed. That’s a huge problem if you’re an employer relying solely on this tech.
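A quick back-of-the-envelope calculation shows why that range matters. The sketch below assumes a pool of 1,000 applications in which 31% are AI-generated (borrowing the figures quoted earlier purely for illustration) and applies the 10% and 50% false positive rates to the remaining human-written resumes. The function name is hypothetical.

```python
def wrongly_flagged(applications: int, ai_share: float, false_positive_rate: float) -> int:
    """Estimate how many human-written resumes a detector would flag as AI.

    applications: total resumes in the pool (assumed figure)
    ai_share: fraction of the pool that is AI-generated (assumed figure)
    false_positive_rate: fraction of human-written text the tool mislabels
    """
    human_written = applications * (1 - ai_share)
    return round(human_written * false_positive_rate)


for rate in (0.10, 0.50):
    flagged = wrongly_flagged(1000, 0.31, rate)
    print(f"False positive rate {rate:.0%}: {flagged} human-written resumes flagged")
```

Under those assumptions, somewhere between 69 and 345 people who wrote their own resumes would be labelled as AI users for a single opening.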

And there’s a deeper, more concerning bias. A 2023 Stanford study found that GPT detectors discriminate against non-native English writers. Across the detectors tested, the average false positive rate on essays written by non-native English speakers was about 61%. This isn’t just a technical glitch. It’s an ethical issue. It means someone who carefully crafted their resume, perhaps with a slightly different sentence structure or vocabulary because English isn’t their first language, is far more likely to be unfairly flagged.

It’s also worth remembering that even OpenAI, the creator of ChatGPT, shut down its own AI Text Classifier in July 2023. Why? Because it correctly flagged only about 26% of AI-written text. If the original creators can’t build a reliable detector, it makes you wonder about the reliability of third-party tools, doesn’t it?

What Recruiters Actually Do (Spoiler: Most Don’t Run Detection)

Alright, so we know the tools exist, and we know they have flaws. But do recruiters actually use them? This is where the rubber meets the road. And the honest answer is, for most entry to mid-level roles, probably not directly.

Remember that 21% of employers who struggle to distinguish AI-generated resumes? That means 79% of them either feel they can distinguish, or they’re just not bothering to use detection software in the first place. My gut tells me it’s more of the latter.

Recruiters are incredibly busy. They’re swamped with applications. They’re trying to fill roles quickly. Adding another layer of AI detection to their workflow, especially one known for false positives, isn’t high on their priority list. It takes time. It costs money. And it might just flag a perfectly good candidate.

Instead, recruiters rely on a mix of Applicant Tracking Systems (ATS), keyword matching, and good old human intuition. They’re looking for specific skills, experiences, and qualifications. They’re scanning for red flags, sure, but those red flags usually aren’t “this text feels like AI.” They’re more like “this person claims 10 years experience but graduated last year,” or “this resume has no measurable achievements.”

Think about it. A recruiter spends maybe 6 to 10 seconds on a resume during the initial scan. They’re looking for keywords that match the job description. They’re looking for a clear career progression. They’re looking for quantifiable results. They are not usually copy editors trying to figure out if your prose was human or machine generated.

Here’s what a real recruiter might think:

- “Does this person have the required experience in [industry name]?”

- “Do I see [specific software skill] or [specific technical skill] listed?”

- “Are there actual numbers here? Did they increase sales by 15% or just ‘improved sales’?”

- “Does this resume look professionally formatted and easy to read?” (The FreeCV builder helps here.)

They’re looking for substance, not just perfect grammar. They’re not running your resume through GPTZero. They’re running it through their own brain, quickly cross-referencing against the job description and their experience with past candidates.

Now, for very high-stakes roles, or perhaps in industries with strict compliance, maybe some companies are experimenting with detection. But for the vast majority of roles you’re applying for, the direct use of AI detection software is probably minimal. The real risk, I think, comes from a different angle entirely. It’s about how your AI-generated text feels to a human reader, not what a piece of software says.

The Real Risk: It’s Not Detection, It’s Detectability Through Pattern

This is where your anxiety should actually live. It’s not about a glowing red line appearing on a recruiter’s screen, screaming “AI detected!” That’s rare, as we’ve discussed. The real danger is much more subtle. It’s about your resume subtly signaling to a human reader that it lacks a personal touch, that it feels generic, that it’s perhaps too perfect. It’s detectability through predictable patterns.

Recruiters might not run your resume through a specific AI detection tool, but they do have an innate “AI radar” now. They’ve seen hundreds, thousands of AI-generated applications over the past year or two. They recognize the patterns, even subconsciously. It’s like spotting a tourist a mile away in your hometown. You just know.

Here are five telltale “AI resume” patterns that recruiters subconsciously notice, without needing any software:

- The “Optimal” Word Choice: AI models are trained on vast amounts of text. They learn what words sound “professional” or “corporate.” This often leads to a reliance on jargon and buzzwords. Think phrases like “spearheaded initiatives,” “fostered synergistic collaborations,” “drove impactful outcomes.” These sound impressive in isolation, but strung together, they create a hollow, generic feel. A real human often uses simpler, more direct language.

- Lack of Specificity: AI struggles with true originality and concrete detail unless explicitly prompted. If your resume talks about “significant improvements” or “various projects” without quantifiable results or specific examples, it starts to sound like AI fluff. Humans remember specifics. Machines generalize.

- Homogeneous Sentence Structure & Length: We talked about burstiness. AI generated text often has a monotonous rhythm. Every sentence seems to be about the same length. Every bullet point might start with a strong verb, which sounds good in theory, but when every single one does, it becomes predictable and loses impact. It lacks the natural ebb and flow of human speech.

- Overly Formal or “Polished” Tone: AI often defaults to an extremely formal, almost sterile tone. It removes contractions. It avoids any hint of personality or unique phrasing. While professionalism is good, a resume that is too perfect, too devoid of any human quirks, can feel off. It might not sound like you.

- Repetitive Phrasing & Redundancy: Because AI is trying to match keywords and fill space, it can sometimes rephrase the same idea multiple times using different synonyms. Or it might repeat skills or accomplishments in slightly different ways across sections. A human editor would catch this and condense it. AI, left unchecked, can be a bit verbose and redundant.
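If you want a rough self-check for the first two patterns before a human reads your resume, a few lines of code can do a first pass. This is only a sketch: the buzzword and vague-phrase lists are seeded from the examples above, the function name is hypothetical, and it is no substitute for reading your resume aloud. It flags bullets that lean on buzzwords, use vague phrases, or contain no numbers at all.

```python
import re

# Seed lists taken from the examples in this article; extend them as needed.
BUZZWORDS = ("spearheaded", "fostered", "synergistic", "impactful")
VAGUE_PHRASES = ("significant improvements", "various projects")


def review_bullets(bullets: list[str]) -> dict[str, list[str]]:
    """Group resume bullets by the generic-sounding pattern they trigger."""
    findings: dict[str, list[str]] = {"buzzwords": [], "vague": [], "no_numbers": []}
    for bullet in bullets:
        lowered = bullet.lower()
        if any(word in lowered for word in BUZZWORDS):
            findings["buzzwords"].append(bullet)
        if any(phrase in lowered for phrase in VAGUE_PHRASES):
            findings["vague"].append(bullet)
        if not re.search(r"\d", bullet):  # no digit means no quantified result
            findings["no_numbers"].append(bullet)
    return findings


bullets = [
    "Spearheaded initiatives that drove impactful outcomes",
    "Cut invoice processing time from 5 days to 2",
    "Delivered significant improvements across various projects",
]
for pattern, hits in review_bullets(bullets).items():
    print(pattern, hits)
```

In this example the second bullet passes every check because it is plain and specific. The first and third are the ones a recruiter is likely to skim past.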

These aren’t things an AI detector is necessarily flagging. This is what a human recruiter, after reading hundreds of AI-assisted resumes, will just feel. They’ll get a sense that this resume isn’t authentic, even if they can’t pinpoint why. It’s like looking at stock photography versus a genuine portrait. One just feels real.

So, the risk isn’t a technical “AI detected” flag. It’s the human intuition that your resume sounds like it was written by a well-meaning, but ultimately impersonal, machine. And that’s often enough for them to move on to the next application.

The 4 Categories of AI Use (Only 1 Will Get You Flagged)

Not all AI use is created equal. It’s important to understand the different ways you might be using AI, because only one of them carries a significant risk of getting you flagged, either by a tool or by a recruiter’s gut feeling.

Let’s break it down into four categories:

1. AI as an Assistant (Safe)

This is the smartest, safest way to use AI. You’re still in the driver’s seat. You’re using AI for brainstorming, research, or generating ideas. Maybe you ask ChatGPT to list common skills for a “Senior Marketing Manager” role. Or you ask it to help you identify keywords from a job description. You’re using it as a thought partner, a super intelligent search engine. You then take those ideas and phrase them in your own words. This is perfectly fine. No one will know, and it’s actually very helpful.

2. AI as an Editor (Safe)

This is also a highly recommended and safe use case. You write your resume yourself, in your own voice. Then you paste it into an AI tool and ask it to proofread, check for grammar, suggest clearer phrasing, or shorten sentences. It’s like having a very fast copy editor. The core content, the unique voice, the specific achievements, they all originate from you. The AI just polishes it. A 2024 University of Maryland study found that AI-generated text becomes nearly undetectable after just one round of human editing. If a light human edit is enough to do that to machine text, a resume you wrote yourself and only had AI polish is on even safer ground, because the substance and voice were yours to begin with. This is a powerful technique.

3. AI as a Generator (Risky)

This is where things start to get dicey. This is when you give AI a few bullet points, or even just a job title and some basic information, and ask it to “write my resume.” Or “write me a professional summary for a software engineer.” The AI then generates significant portions of text, perhaps entire sections, based on its training data. This is what often leads to the “AI patterns” we just discussed: generic phrasing, lack of specificity, monotonous tone. It’s detectable by pattern, if not by software.

This is the category that accounts for the 31% of candidates using AI directly to generate CVs. It’s efficient, yes, but it often sacrifices authenticity for speed. If you do this, you absolutely must follow up with extensive human editing and customization.

4. AI as a Fabricator (Career-Ending)

This is the absolute worst way to use AI, and it carries severe consequences. This is when you ask AI to invent experiences, skills, or achievements that you don’t actually have. “Write me a bullet point about leading a project team, even though I’ve never done that.” Or “Generate a list of technical skills I should have for this job, and put them on my resume.” This is fraud. Pure and simple. Even if the AI text isn’t detected, the lies will be. In an interview, when asked to elaborate, you’ll be caught. This will get you blacklisted, not just from one company, but potentially from an entire industry. Never do this. Seriously.

The 70 to 80 Percent Match Rule: How AI Screening Actually Scores You

So, we’ve talked about detection. Now let’s talk about the other side of the AI coin: the Applicant Tracking System, or ATS. These are the unsung heroes and silent gatekeepers of modern hiring. Systems like Workday, Greenhouse, Lever, BambooHR, iCIMS, Taleo, and SmartRecruiters process millions of applications every day. And they use AI, or at least advanced algorithms, to do it.

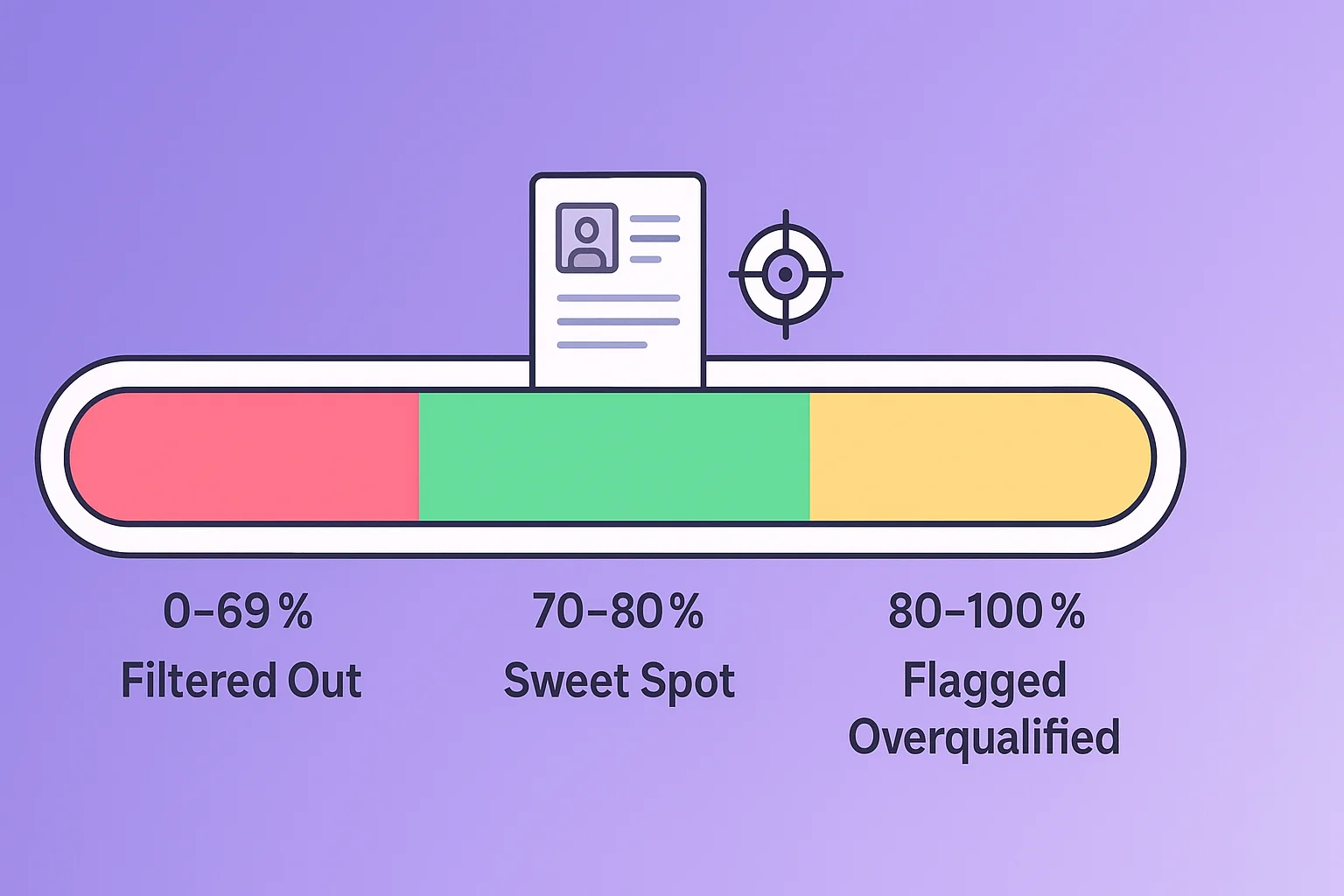

Your resume’s biggest hurdle isn’t usually an AI detection tool checking for GPT. It’s an ATS checking for relevance. It’s looking for keywords. It’s scoring your match against the job description. This is where the “sweet spot” comes in.

The ‘sweet spot’ for AI screening is when candidates match 70 to 80% of the listed requirements. This is a critical piece of information. Below 70% and you’re likely filtered out. Your resume doesn’t have enough of the right keywords, or your experience doesn’t align closely enough. Above 80%, and you risk getting flagged as overqualified, or sometimes, even as “AI-stuffed keywords.”

Why “overqualified”? Because an ATS is programmed to find the ideal candidate, not necessarily the most experienced. Someone who perfectly matches 100% of the job description might be seen as either a flight risk (they’ll get bored and leave) or someone who’s just aggressively keyword-stuffed their resume. It sounds counterintuitive, but it’s how these systems are often configured. They want a good fit, not a perfect one that might be suspicious.

This means your strategy should be to tailor your resume specifically for each job application, aiming for that 70 to 80% match. You can’t just generate one generic AI resume and send it everywhere. That’s a surefire way to get filtered out.

Tools like the FreeCV ATS checker can help you test your resume’s pass rate before you apply. You upload your resume, paste the job description, and it gives you a percentage match. This is far more important for getting past the initial hurdle than worrying about AI detection.
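To see what a percentage match means in practice, here is a simplified sketch. Real ATS scoring is proprietary and varies by vendor, so this assumes the simplest possible model: the share of required keywords that appear somewhere in your resume, sorted into the bands described above. The function names and the keyword list are hypothetical.

```python
import re


def keyword_match(resume: str, required_keywords: list[str]) -> float:
    """Return the percentage of required keywords found in the resume text."""
    if not required_keywords:
        raise ValueError("required_keywords must not be empty")
    text = resume.lower()
    hits = [
        kw for kw in required_keywords
        # Lookarounds match whole terms and still work for names like "c++".
        if re.search(r"(?<!\w)" + re.escape(kw.lower()) + r"(?!\w)", text)
    ]
    return 100 * len(hits) / len(required_keywords)


def match_band(score: float) -> str:
    """Map a match percentage onto the bands described in this article."""
    if score < 70:
        return "below the sweet spot"
    if score <= 80:
        return "sweet spot"
    return "above the sweet spot"


resume = (
    "Built Python ETL pipelines on AWS, wrote SQL reports, "
    "and presented dashboards in Tableau."
)
required = ["python", "sql", "aws", "tableau", "spark",
            "etl", "dashboards", "airflow", "reports", "pipelines"]
score = keyword_match(resume, required)
print(f"{score:.0f}% match: {match_band(score)}")
```

The sample resume covers eight of the ten listed keywords, which lands at 80%. A simple count like this says nothing about how well you describe the experience behind each keyword, which is where your own editing comes in.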

So, when you’re using AI, use it to help you identify those crucial keywords. Use it to phrase your experience in a way that resonates with the job description. But don’t just dump a list of AI-generated keywords into your resume. That’s what gets you flagged at the high end of the match spectrum.

It’s about strategic alignment, not just volume. You need to understand why CVs get auto-rejected and how to prevent it. AI can help you with this, but you have to guide it. You still need to be the brain. The AI is just the very fast, very eager assistant.

What Published Research Says About Detection Accuracy

Let’s zoom out for a moment and look at the broader academic and industry research. It paints a pretty consistent picture, one that contrasts sharply with the bold claims of AI detection tool vendors.

We already touched on OpenAI’s decision to shut down their own AI Text Classifier in July 2023. Their rationale was simple: it wasn’t accurate enough. Specifically, it only correctly identified AI-written text 26% of the time. If the company that created the foundational technology for these models can’t reliably detect its output, what does that say about everyone else?

Then there’s the 2023 Stanford study, which highlighted the significant bias against non-native English writers. An average false positive rate of about 61% for this group is simply unacceptable. This isn’t about catching cheaters. This is about unfairly penalizing a large segment of the global workforce, people who are already at a disadvantage in many job markets.

Perhaps the most reassuring piece of research for job seekers comes from a 2024 University of Maryland study. This research found that AI-generated text becomes nearly undetectable after just one round of human editing. Let that sink in. A human looking over AI output, making a few tweaks, rephrasing some sentences, injecting some personal style, is often enough to fool even sophisticated detection systems.

What does this all mean for you? It means the vendor-claimed accuracy numbers (94-96%) should be viewed with extreme skepticism. They are often based on ideal conditions, or proprietary datasets that don’t reflect the messy reality of diverse human and AI interactions with text.

Furthermore, AI-driven fraud more broadly is on the rise, which puts more pressure on hiring teams. Gartner predicts that 1 in 4 candidate profiles worldwide will be fraudulent by 2028. And InCruiter reported in 2026 that 25 to 30% of suspicious interview sessions show fraud or deepfake activity. This growing concern about fraud, however, is leading companies to invest more in identity verification and behavioral assessments, rather than just AI text detection for resumes. The focus is shifting from “did AI write this?” to “is this person real and are their claims true?” This is a crucial distinction.

So, while AI detection tools exist, their real-world accuracy is questionable, and their use in the initial resume screening process is not as widespread as you might fear. The evidence suggests that a human touch, even a brief one, can effectively mask AI origins. And employers are more concerned about outright fraud and qualification mismatches than the provenance of your phrasing.

How to Use AI on Your Resume Without Getting Flagged

Given everything we’ve discussed, how do you actually use AI smartly and safely? The goal is to get the benefits of AI while keeping the risks to a minimum. It’s entirely possible. Here’s a step-by-step guide:

1. Start with Your Human Core

Don’t ask AI to write your resume from scratch, at least not for the first draft. Brainstorm your achievements, skills, and experiences yourself. Write down the raw data. What did you accomplish? What numbers can you attach to those accomplishments? What unique projects did you work on? This ensures the “human element” is always present. You can use a tool like the FreeCV builder to structure your thoughts and content first.

2. Use AI for Targeted Assistance, Not Generation

Instead of “Write me a resume for a marketing manager,” try these types of prompts:

- “I led a project that increased customer retention by 15%. How can I phrase this into a strong, concise bullet point for a resume?”

- “Here is a job description for a ‘Data Analyst.’ What are the top 5 keywords I should ensure are prominently displayed in my resume?”

- “I need to write a professional summary for a software engineer with 5 years experience in Python and AWS. Here are my key achievements: [list achievements]. Draft a summary based on these.”

This is about surgical edits and keyword alignment, not wholesale creation. For more ideas, check out our guide on 15 ChatGPT prompts that work.

3. Customize for Each Role

This is absolutely crucial. Never send a generic AI-generated resume. Use AI to help you tailor your resume to each specific job description. Paste the job description and your base resume into an AI. Ask it: “Compare my resume to this job description. What skills or experiences should I emphasize more? How can I rephrase existing bullet points to better align with the requirements?” This helps you hit that 70-80% ATS match sweet spot.

4. Inject Your Voice and Personality

After AI has done its work, make it yours. Read every sentence aloud. Does it sound like you? Would you say these words in an interview? Replace generic AI phrasing with your own, even if it’s slightly less formal. Use specific anecdotes where appropriate. This is your chance to make it sound human. Remember that University of Maryland study. One round of human editing makes a huge difference.

5. The “30-Second Human Pass”

Before you hit submit, do a final, quick read-through. Put yourself in the shoes of a busy recruiter. Does it grab your attention? Is it easy to read? Are there any odd phrases that just don’t sound quite right? This quick pass is often enough to catch those subtle “AI patterns” that might otherwise slip through. Ask a friend or colleague to read it too. An external perspective is invaluable.

By following these steps, you’re not just passively letting AI write your career story. You’re actively directing it, leveraging its power to enhance your own message, ensuring it remains authentic, compelling, and ultimately, effective in landing you that interview.

The Future: What 2027 Hiring Will Look Like

Where is all of this heading? The landscape is constantly shifting, but some trends are already clear for 2027 and beyond. This question of “can employers tell if I used AI on my resume?” might not even be relevant in a few years, at least not in the same way.

The arms race between AI generation and AI detection is unsustainable. As generative AI gets better, so will its ability to mimic human writing. And as detection tools try to keep up, their false positive rates will likely continue to be an issue, unfairly penalizing good candidates.

Instead, the industry is moving towards more robust, verifiable methods of assessment. Here’s what we can expect:

- Skills-Based Hiring: Companies are increasingly focusing on demonstrable skills rather than just traditional degrees or years of experience. This means more skills tests, practical assessments, and portfolio reviews. Your resume will become less about perfectly worded bullet points and more about verifiable proof of ability.

- Verified Credentials & Digital Badges: Imagine a world where your education, certifications, and even specific project contributions are verified by third parties and presented as secure digital credentials. This moves beyond the self-reported nature of a resume.

- Behavioral & Situational Assessments: These are already common, but they’ll become even more prevalent. These tests assess how you think, problem-solve, and interact in work-like scenarios. AI can’t really fake genuine cognitive abilities or personality traits.

- Interview Focus: The interview will become even more critical. With the rise of AI-generated applications, recruiters will rely heavily on interviews to gauge authenticity, depth of experience, and cultural fit. If your resume says you “drove impactful outcomes,” be ready to explain exactly how, with specific examples and numbers.

- AI as a “Copilot” for Recruiters: While AI detection for applicants might wane, AI tools helping recruiters parse resumes, identify key skills, and even suggest interview questions will only grow. This means you’ll be interacting with AI on both sides of the hiring equation.

The resume keyword game, where you stuff your CV with every possible buzzword to beat the ATS, is slowly dying. It’s being replaced by a more holistic, and hopefully more equitable, approach to evaluating talent. The focus will shift from the prose on your resume to the actual skills and verifiable impact you bring to the table.

So, while it’s wise to be cautious about AI detection today, the bigger picture is that the very nature of hiring is evolving. The question won’t be “did AI write this?” but rather “can you prove this?” And that’s a much harder thing for any AI to fake, I think. Your genuine skills and experiences will always be your strongest assets. Invest in those, and you’ll be ready for whatever 2027 and beyond throws at you.